How Log Correlation Actually Works Under the Hood

Picture your NOC at 11pm. Three engineers are watching dashboards. The monitoring platform has fired 1,400 alerts in the last hour. Somewhere in that noise, a slow memory leak on a critical app server is quietly building toward a crash that will take down a client’s ERP at 6am.

Nobody notices. Not because the engineers are not good at their jobs. Because the meaningful signal is buried under hundreds of low-priority pings about CPU fluctuations, disk warnings, and certificates expiring in 90 days.

That is not a staffing problem. That is an architecture problem. And the technology built to solve it is called a SIEM.

SIEM — Security Information and Event Management — is the platform that ingests logs from across an environment, makes sense of them, and surfaces the things that actually need human attention. Most people in IT know what a SIEM is. Fewer understand how the correlation engine inside it actually works. That gap matters, because the SIEM is only as useful as the logic running inside it — and that logic needs to be built, tuned, and maintained deliberately.

1. What a SIEM Actually Does — The Three Jobs

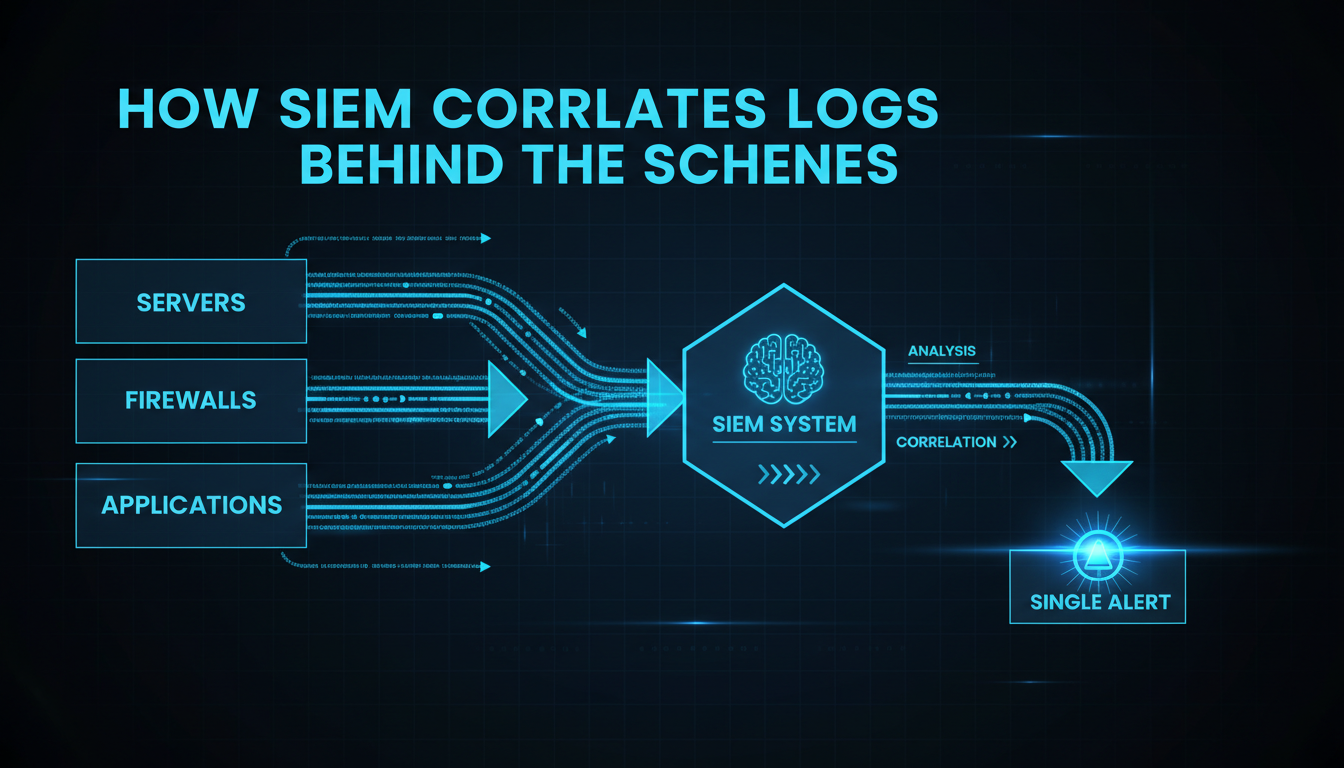

Strip away the marketing and a SIEM does three things: it collects logs from wherever they are generated, it applies detection logic to find meaningful patterns in those logs, and it presents the results to analysts in a way they can act on. Each job is harder than it sounds.

Collection

Every device, application, and service in a client environment generates logs — firewalls, domain controllers, endpoints, cloud platforms, email security gateways, Active Directory, VPNs. The SIEM needs to ingest all of them. Logs arrive in different formats: Windows Event Log, syslog, JSON from cloud APIs, custom application formats. A good SIEM has connectors for common sources and a parsing layer that normalises everything into a common data model so the correlation engine can work across them.

The critical point here: you cannot detect what you cannot see. A gap in log collection is a gap in detection. Before asking ‘why did the SIEM not catch this?’, the first question is always: ‘was the relevant log source even being ingested?’

Correlation

Raw logs are noise. A Windows domain controller generates 50,000 events per day. A firewall generates 300,000. Correlation is the process that turns that noise into signal — by finding patterns across events that individually mean nothing but together indicate something worth investigating.

This is where most of the technical sophistication in a SIEM lives, and it is worth understanding the different types of correlation logic in use.

Alerting and Investigation

When the correlation engine finds a pattern worth acting on, it creates an alert. A good alert is not just ‘something happened’ — it includes the relevant events, the enrichment context (what user, what asset, what geolocation, what threat intel says about the IP), and enough narrative for an analyst to understand what they are looking at without spending 20 minutes manually reconstructing it.

2. How Correlation Actually Works — The Five Types

This is the part that is usually glossed over in vendor documentation but matters enormously in practice. The type of correlation determines what threats get detected and what gets missed. Mature SIEM deployments use all five simultaneously.

| Correlation Type | How It Works | Best At Detecting |

| Rule-Based | IF/THEN logic: if X events happen within Y time window, fire alert. | Known attack patterns with clear signatures. Fast and reliable for covered scenarios. Example: 5 failed logins from the same IP in 2 minutes. |

| Threshold-Based | Alert when event count exceeds a defined number in a time window. | Volume anomalies — DoS attempts, data staging, brute-force. Can miss gradual ramp-up attacks. |

| Behavioural / ML | Learns what ‘normal’ looks like per user, host, or process. Flags statistical deviations. | Insider threats, slow lateral movement, novel techniques rules do not cover. Needs 4-6 weeks of baseline data before it produces useful signal. |

| Sequence-Based | Detects ordered chains of events across sources within a time window. Variables bind across stages. | Multi-stage attack chains. Closest to how a real investigation unfolds. High signal, higher compute cost. |

| Threat Intel Matching | Compares observables (IPs, domains, hashes) against external threat feed IOCs in real time. | Known bad infrastructure and C2 domains. High precision but decays fast — feeds must be fresh. |

The five correlation types — how each works and what it catches best

A few things worth calling out from that table. Rule-based correlation is fast and reliable but only covers what someone has already thought to write a rule for. Behavioural correlation is powerful against novel attacks but takes weeks to establish a meaningful baseline. Sequence-based correlation is what lets the SIEM see a full attack chain rather than five unrelated events — but it requires the most engineering effort to build well.

The teams that get the most out of a SIEM use rule-based and threat intel matching as the fast-response layer, and behavioural correlation as the slow-burn layer that catches the things rules miss. Neither alone is sufficient.

3. The Part No One Tells You — Why SIEM Quality Degrades

A SIEM that is deployed and then left alone will perform worse every month. This is not a product flaw — it is an operational reality that catches almost every team that does not plan for it.

Alert Fatigue is a Design Failure, Not a People Failure

When analysts start ignoring alerts, the instinct is to blame them for not being diligent enough. The real cause is almost always overly broad rules that fire on benign activity, combined with no feedback loop to tune them. If a rule fires 200 times a day with zero confirmed true positives, the rule is wrong — not the analyst who stopped caring about it.

The standard to aim for: every rule in production should have a documented true-positive rate. Any rule with a sustained false positive rate above 90% needs to be rewritten or retired. This sounds obvious. Most environments do not do it.

Silent Coverage Gaps Are the Real Risk

Alert fatigue is visible — you can see it in the metrics, hear it in how analysts talk about the queue. Silent coverage gaps are worse, because nobody is complaining. A log source that stopped forwarding three months ago shows nothing wrong on any dashboard. The only way to find it is to actively monitor ingestion health — alert when a source that should be sending data goes quiet for more than 15 minutes.

In environments we have helped MSPs build out, this ingestion health monitoring catches real gaps far more often than you would expect. A Windows agent update silently breaks log forwarding. A network change orphans a syslog collector. An EDR platform gets updated and the SIEM connector stops working. None of these produce obvious alerts. All of them create invisible blind spots.

The Tuning Cadence That Actually Works

The SIEM teams that maintain quality over time share one habit: they treat detection engineering as an ongoing operational function, not a project. Specifically:

- Weekly: Review the top-firing rules. Any rule that fired 100+ times with zero confirmed true positives gets tuned immediately.

- Weekly: Check ingestion health. Any source silent for more than 4 hours that should be active is a gap until proven otherwise.

- After every incident: Did the SIEM detect this? If not, write a new rule before the ticket closes. Convert every incident into a permanent detection improvement.

- Quarterly: Full rule review. Retire anything with zero true positives over 90 days. Validate coverage against the ATT&CK techniques most relevant to your client environments.

4. SIEM vs. XDR — Why It Is Not Either/Or

XDR (Extended Detection and Response) gets positioned as the SIEM replacement by every vendor selling it. The reality is more nuanced. XDR is excellent at fast, high-fidelity detection within its supported telemetry domains — endpoint, email, identity, cloud. It reduces alert noise significantly and requires less analyst tuning than a traditional SIEM.

What XDR typically does not do well: long-term log retention for compliance, custom detection logic for bespoke environments, or coverage of data sources outside the vendor’s ecosystem. A SIEM ingesting 47 different log sources, a legacy application, and three different cloud platforms will have visibility that no single XDR vendor can match.

| The Practical Answer for MSP Environments For clients with compliance logging requirements or complex multi-source environments: SIEM remains the right tool. XDR can sit alongside it as the primary analyst interface for fast triage. For clients without those requirements, where simplicity and low analyst overhead matter most: a well-configured XDR with vendor-managed detection content often outperforms a SIEM that nobody has time to tune. The honest truth: a mediocre XDR operated by an engaged team will outperform a premium SIEM that has not been touched since deployment. The platform matters less than the operational discipline. |

Final Thought

A SIEM is the closest thing to a nervous system that a security programme has. When it works well, it is the difference between catching a credential-stuffing campaign on day two and discovering a ransomware infection on day thirty.

But it only works well if someone is doing the operational work: monitoring ingestion health, tuning rules, feeding incident findings back into new detections. The teams that understand this — that a SIEM is an ongoing engineering discipline rather than a product you deploy and bill for — deliver meaningfully better security outcomes for their clients. That gap is where managed SOC operations earn their keep.

| About TechMonarch The ingestion health monitoring, tuning cadences, and incident-to-detection feedback loops described in this article are how our SOC team operates every day — across a diverse set of client environments. The SIEM gets better with every incident. That is the design. If you are an MSP looking for a white-label SOC partner who treats detection engineering as an ongoing discipline rather than a one-time setup, let’s talk. Get in touch: www.techmonarch.com |

Recent Comments

- No comments to show